Overview

Architecture and Core Technologies

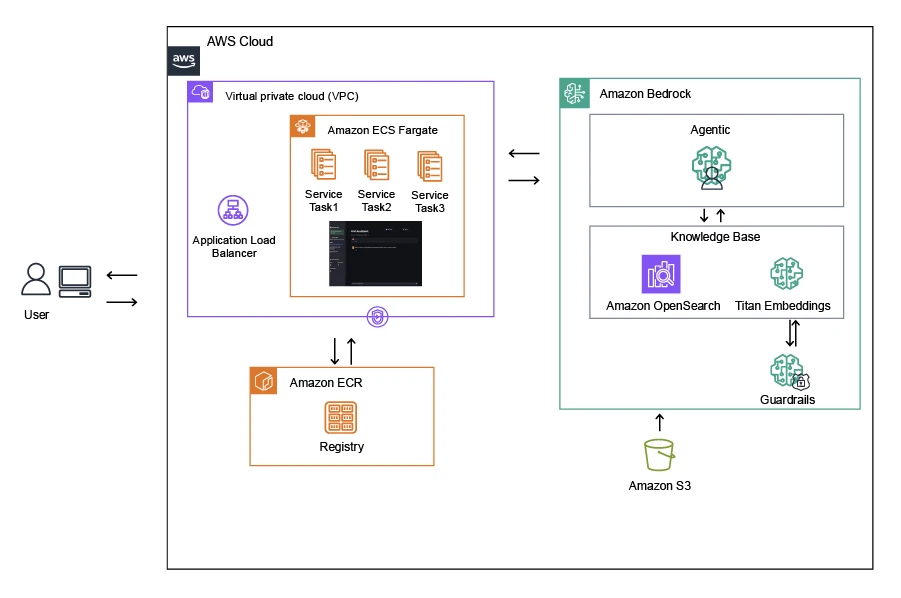

The AI Assistant Solution supports a broad set of knowledge-intensive scenarios where teams need fast, trustworthy answers. AWS Partners can quickly retrieve service details, reference architectures, and migration playbooks, while enterprise users can query SOPs, compliance policies, and product documentation in natural language. Because it runs on Amazon Bedrock’s managed foundation models, the solution removes the heavy lifting of model hosting and tuning, so customers can focus on delivering value rather than maintaining ML stacks. Reliance on fully managed AWS services, including Amazon Bedrock, Amazon ECS on AWS Fargate, and Amazon OpenSearch Service, supports cost efficiency, operational resilience, and enterprise-grade security in line with the AWS shared responsibility model.

The AI Assistant Solution is built for AWS Partners, Managed Service Providers (MSPs), SMBs, and large enterprises seeking to embed generative AI into daily workflows. Engagements typically start with a discovery workshop to map data sources and access patterns, followed by provisioning of a dedicated OpenSearch knowledge base and Bedrock agent. Delivery options include SaaS, private-tenant deployments, and partner co-branding. Users access the assistant through a secure web portal or an API, enabling rapid, reliable knowledge retrieval across teams. Partner-Led Support is available under AWS guidelines to provide proactive monitoring and continuous availability.
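As an illustration of what programmatic access to the knowledge base could look like, the sketch below builds a request for the `retrieve_and_generate` operation of the boto3 `bedrock-agent-runtime` client. It assumes the OpenSearch-backed knowledge base is registered as an Amazon Bedrock knowledge base; the solution's own API endpoints are not described here, and the knowledge base ID, model ARN, and Region shown are placeholders rather than values from an actual deployment.

```python
from typing import Any, Dict


def build_kb_query(question: str, knowledge_base_id: str, model_arn: str) -> Dict[str, Any]:
    """Build keyword arguments for bedrock-agent-runtime's retrieve_and_generate."""
    cleaned = question.strip()
    if not cleaned:
        raise ValueError("question must not be empty")
    return {
        "input": {"text": cleaned},
        "retrieveAndGenerateConfiguration": {
            "type": "KNOWLEDGE_BASE",
            "knowledgeBaseConfiguration": {
                # Placeholder identifiers supplied by the caller (assumptions).
                "knowledgeBaseId": knowledge_base_id,
                "modelArn": model_arn,
            },
        },
    }


def ask_assistant(question: str, knowledge_base_id: str, model_arn: str,
                  region: str = "us-east-1") -> str:
    """Send the question to Amazon Bedrock and return the generated answer text."""
    # Imported lazily so the request builder can be used without AWS access.
    import boto3

    client = boto3.client("bedrock-agent-runtime", region_name=region)
    response = client.retrieve_and_generate(
        **build_kb_query(question, knowledge_base_id, model_arn)
    )
    return response["output"]["text"]
```

Keeping request construction separate from the network call makes the payload easy to inspect and unit test before any AWS credentials are involved.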

Security and scale are foundational to the AI Assistant Solution. Data is encrypted in transit and at rest, and the architecture spans multiple Availability Zones for high availability. Auto scaling of the Fargate tasks adjusts capacity to traffic patterns without manual intervention. Access is governed through AWS IAM, with optional integration with AWS IAM Identity Center (SSO). Service health and performance are continuously tracked with Amazon CloudWatch, and the environment is operated in line with AWS Well-Architected Framework best practices for reliability, cost optimization, and operational excellence.
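One common way to scale an ECS service on Fargate without manual intervention is a target tracking policy managed by Application Auto Scaling. The following is a minimal sketch of that approach, not the solution's actual configuration: the cluster and service names, task limits, and the 60% CPU target are all assumed values chosen for illustration.

```python
from typing import Any, Dict, Tuple


def build_scaling_requests(cluster: str, service: str, min_tasks: int = 2,
                           max_tasks: int = 10,
                           target_cpu_percent: float = 60.0
                           ) -> Tuple[Dict[str, Any], Dict[str, Any]]:
    """Build arguments for register_scalable_target and put_scaling_policy."""
    if not 0 < min_tasks <= max_tasks:
        raise ValueError("require 0 < min_tasks <= max_tasks")
    if not 0 < target_cpu_percent <= 100:
        raise ValueError("target_cpu_percent must be in (0, 100]")

    # Fields shared by both Application Auto Scaling calls.
    common = {
        "ServiceNamespace": "ecs",
        "ResourceId": f"service/{cluster}/{service}",
        "ScalableDimension": "ecs:service:DesiredCount",
    }
    target = {**common, "MinCapacity": min_tasks, "MaxCapacity": max_tasks}
    policy = {
        **common,
        "PolicyName": f"{service}-cpu-target-tracking",  # hypothetical name
        "PolicyType": "TargetTrackingScaling",
        "TargetTrackingScalingPolicyConfiguration": {
            "TargetValue": float(target_cpu_percent),
            "PredefinedMetricSpecification": {
                "PredefinedMetricType": "ECSServiceAverageCPUUtilization"
            },
        },
    }
    return target, policy


def apply_scaling(cluster: str, service: str, region: str = "us-east-1") -> None:
    """Register the service as a scalable target and attach the policy."""
    # Imported lazily so the builder above can be used without AWS access.
    import boto3

    client = boto3.client("application-autoscaling", region_name=region)
    target, policy = build_scaling_requests(cluster, service)
    client.register_scalable_target(**target)
    client.put_scaling_policy(**policy)
```

A minimum of two tasks is used in this sketch so that tasks can be spread across more than one Availability Zone; the right limits depend on the workload.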

The AI Assistant Solution is delivered as a provisioned, configurable service that runs within the customer’s AWS environment. It can be deployed in any AWS Region where Amazon Bedrock is available, with flexible setup options that align with each customer’s architecture and security requirements. The SiS Team assists in provisioning the infrastructure, configuring Bedrock models and OpenSearch knowledge bases, and ensuring that the solution operates securely and efficiently on AWS. Customers can request deployment through a service engagement or integrate the AI Assistant into their existing workloads using API endpoints or a web interface. Detailed documentation, onboarding materials, and operational guidelines are provided to help internal teams manage and extend the solution independently.